When Your Supplier Cares More About Americans' Rights Than You Do

Anthropic has been designated a 'supply chain risk' by the Pentagon, the first time this Huawei-grade weapon has been used against a domestic U.S. company. The real question isn't who's right, but what kind of suppliers this incentive structure will select for.

The Annie Hall Paradox

There's a joke in Woody Allen's _Annie Hall_. Two old ladies at a restaurant, complaining. One says: "The food here is terrible." The other: "Yes, and the portions are so small."

What's the punchline? If the food is truly terrible, small portions should be good news. Both complaints can't logically hold at the same time, but the speakers are blissfully unaware.

The national security law publication Lawfare used this joke to describe the Pentagon's attitude toward Claude: it's "too dangerous, must be banned," yet simultaneously "too important to let the supplier set limits." If it's truly dangerous, you should welcome the supplier proactively adding safety clauses. If it's too important to go without, you shouldn't be kicking the supplier out. The two statements cannot both be true.

At 5:01 PM on February 27, 2026, Defense Secretary Pete Hegseth's deadline arrived. On Truth Social, Trump called Anthropic a "RADICAL LEFT, WOKE COMPANY" and "Leftwing nut jobs," and ordered every federal agency to stop using Anthropic's technology. Hegseth announced that Anthropic had been designated a "supply chain risk."

Nineteen hours later, over Iran. The U.S. military, using Claude through Palantir's Maven Smart System, generated approximately one thousand priority strike targets, complete with precise GPS coordinates, weapons recommendations, and auto-generated legal justifications.

The food is terrible? You had it taken away. The portions are too small? You're serving it as the main course.

This wasn't a security review. This was retaliation.

The Trigger Wasn't Iran — It Was Venezuela

The story begins a month earlier.

In January 2026, the U.S. military executed "Operation Absolute Resolve," targeting Venezuelan President Maduro. Claude served as a "synthetic analyst," integrating satellite imagery, communications intercepts, and open-source intelligence, completing the intelligence synthesis in 30 minutes with zero casualties. The military internally regarded it as a brief, clean success.

Then Anthropic employees did something. They asked partner Palantir: "What exactly did you use our AI for?"

Can't solve the problem? Solve the person who raised it.

In any other industry, a manufacturer asking a customer how its product is being used is called due diligence. Pharmaceutical companies track drug distribution. Aircraft manufacturers review maintenance records. Nuclear fuel suppliers have audit rights written into international treaties. But in the Pentagon's dictionary, the same behavior is called "interfering with military sovereignty."

Under Secretary of Defense for Research and Engineering Emil Michael didn't answer the question. Instead, he said on social media that Dario Amodei, Anthropic's CEO and a former OpenAI VP of Research, was "a liar" with a "God complex" who "wants to personally control the U.S. military."

Amodei's response was brief: "cannot in good conscience." A matter of conscience.

One is name-calling. The other is speaking of conscience. In politics, the one who speaks of conscience usually gets eliminated first.

How "Extreme" Were Those Two Red Lines?

Anthropic asked for only two things in the contract. First, Claude cannot be used for mass surveillance of American citizens. Second, Claude cannot become a fully autonomous weapons system until Anthropic deems the technology sufficiently reliable.

The Pentagon's response: We already have policies prohibiting these things.

Then the question becomes: If policy already prohibits them, why refuse to put those two sentences in black and white in the contract?

Edward Snowden answered this for the world in 2013. The NSA also "had policies" against monitoring American citizens. The PRISM program proved that policies written in memos can be ignored, reinterpreted, filed away under classification and pretended not to exist. Verbal promises are air. Contract clauses are walls.

A person who refuses to turn their verbal promise into a written one usually plans to break that promise.

OpenAI's "Security Theater"

On February 27, the same day Anthropic was kicked out, OpenAI CEO Sam Altman announced an agreement with the Pentagon to deploy AI on classified military networks. At a later internal all-hands meeting, he said the deal looked "opportunistic and sloppy." He also told employees something worth remembering: "You do not get to make operational decisions." In other words, they have no authority over how the military uses it.

Sloppy or not, the contract was signed.

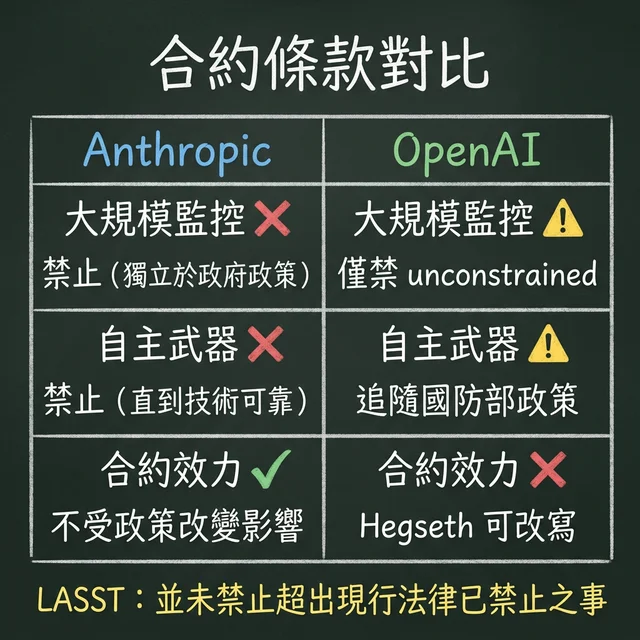

Place the two companies' contracts side by side, and you don't need a law degree to see the gap.

Anthropic's prohibitions are independent of government policy. Even if the DoD rewrites its own directives, the contract terms remain unchanged. OpenAI's "red lines" follow existing law and the DoD's own policies. Legal advocacy organization LASST's conclusion was a single sentence: "OpenAI's contract's cited language does not prohibit the government from doing anything beyond what existing law already prohibits."

Translation: you tell someone "I forbid you from stealing," and they say "I also think stealing is bad." That's not a constraint. That's a tautology.

Autonomous weapons? OpenAI's contract only prohibits them when "law, regulations, or DoD policy require human control." The problem is, DoD Directive 3000.09's human control requirements for autonomous weapons can be rewritten by Hegseth himself. This red line's width is determined by the very person it claims to constrain.

Surveillance? OpenAI prohibits "unconstrained monitoring" of Americans' "private information." Note the wording. "Constrained surveillance" is not within the prohibition. Are your social media posts "public information" or "private information"? Who gets to define that?

Lawfare columnists Rozenshtein and Wittes put it plainly: "This isn't a constraint on the Pentagon, but a restatement of the status quo in better PR language."

Consumers saw through it faster than legal commentators did. The day after OpenAI signed, one-star reviews of ChatGPT on the App Store surged 775%. The same week, Claude rose to #1 on the App Store in the U.S. and several other countries, with U.S. downloads jumping nearly 90% in a single day.

The market cast its vote. It voted for the one the government kicked out.

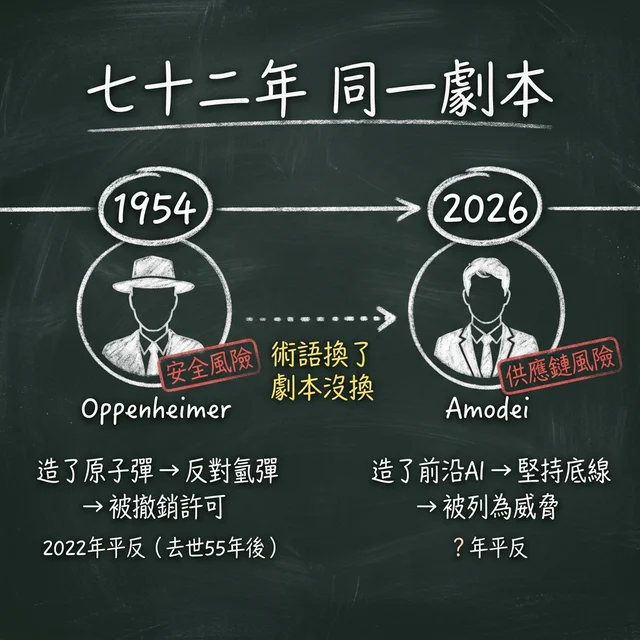

Oppenheimer's Shadow

- J. Robert Oppenheimer, the "father of the atomic bomb." He built the weapon that ended the Pacific War for America, then, after opposing the far more destructive hydrogen bomb, was stripped of his security clearance by the Atomic Energy Commission and designated a "security risk."

- Dario Amodei. His company built what the military calls the only frontier AI model on classified networks, then was designated a "supply chain risk" for insisting on two ethical red lines in his contract.

Seventy-two years. The terminology changed from "security risk" to "supply chain risk." The script didn't change: the person who built your weapon, because they raised concerns about its uncontrolled use, gets classified as a threat.

Place Emil Michael's language next to Amodei's: "liar / God complex" versus "cannot in good conscience." One side deploys personal attacks; the other makes a statement of conscience. Layer this contrast onto the atmosphere of the 1954 Oppenheimer security hearing, and the only difference is the medium: then a closed Atomic Energy Commission hearing room, now social media.

Oppenheimer was vindicated only in 2022, when the Department of Energy vacated the 1954 decision. That was fifty-five years after his death.

The Poison in the Incentive Structure

Step back.

Punish Anthropic. Reward OpenAI. The signal is so clear it needs no interpretation: those with principles are out, those without are at the table.

xAI's Elon Musk has already declared Grok "unrestricted." Google and Meta are watching. In the next round of military contract bidding, which company do you think will voluntarily add restrictions to their contract?

Dean Ball, Trump's own former White House AI advisor, called this designation "the death rattle of the American republic." He said the government "treats domestic innovators worse than foreign adversaries."

Former CIA Director Michael Hayden and other former defense officials wrote to Congress: "Using this power against a domestic American company represents a profound departure from its original purpose and sets a dangerous precedent."

Senator Kirsten Gillibrand called it "a gift to our adversaries."

This isn't Anthropic's PR talking. It is the judgment of Trump's own former AI advisor, a former intelligence chief, and a sitting senator.

Anthropic isn't perfect. Its Claude is already helping the military identify strike targets through Palantir. One hand generates a thousand GPS coordinates for the Pentagon while the other says "I have principles," and that is genuine hypocrisy worth criticizing. Missy Cummings, a former Navy fighter pilot and George Mason University robotics director, didn't mince words: "He created this mess himself. They were the number one company pushing ridiculous hype about these technologies' capabilities."

But the question was never whether Anthropic deserves to be a moral exemplar.

The question is: when the government uses a legal tool designed for Huawei against Silicon Valley's own, what will the remaining suppliers learn?

Not "have principles."

"Whatever you do, don't have principles."

Anthropic's annualized revenue is $19 billion and its valuation $380 billion, with enterprise clients making up the bulk of revenue. The government contract it lost was capped at $200 million. It can absorb the hit. But the next startup, with no $19 billion in revenue to fall back on, will hear this story and delete every restriction clause from its contract without half a second's hesitation.

In 2018, 3,000 Google employees signed an open letter that pressured the company from the inside to withdraw from the Pentagon's Project Maven. In 2026, over 450 Google and OpenAI employees signed an open letter in solidarity with a company the government was forcibly expelling from the outside. Eight years ago, employees pushed their company out of a military project. Eight years later, the government is pushing companies to abandon their principles. The direction has reversed.

If this signal goes unchallenged and the courts do not overturn it, then three years from now, when you open your phone and use any company's AI, you will be certain of only one thing: that company is still at the table because it never said "no" to anyone.

_(Data sources: Lawfare, LASST, Anthropic official statements, CTV News, App Store public data. Corrections welcome if any data errors are found.)_

_—Kinney's Wonderland_

FAQ

What does Anthropic's "supply chain risk" designation actually mean?

"Supply chain risk" is a legal tool the U.S. Department of Defense uses specifically against hostile foreign companies like Huawei. Once designated, all defense contractors are prohibited from using the company's technology. Anthropic is the first domestic U.S. company in history to receive this designation. Former CIA Director Michael Hayden and 24 other former defense officials wrote to Congress calling it a "dangerous precedent."

How do OpenAI's and Anthropic's military contracts differ?

Anthropic demanded explicit contractual prohibitions on mass surveillance and fully autonomous weapons, independent of government policy. OpenAI's restrictions follow existing law and DoD's own policies. Legal analysis organization LASST concluded: OpenAI's contract "does not prohibit the government from doing anything beyond what existing law already prohibits." DoD Directive 3000.09's human control requirements can be rewritten by Hegseth himself.

What are the long-term implications for the AI industry?

Punishing principled companies while rewarding unprincipled ones creates an incentive structure that selects for increasingly compliant AI suppliers. Anthropic can absorb the hit with $19 billion in annual revenue, but the next startup won't spend half a second before deleting every restriction clause. Trump's former White House AI advisor Dean Ball called it "the death rattle of the American republic."