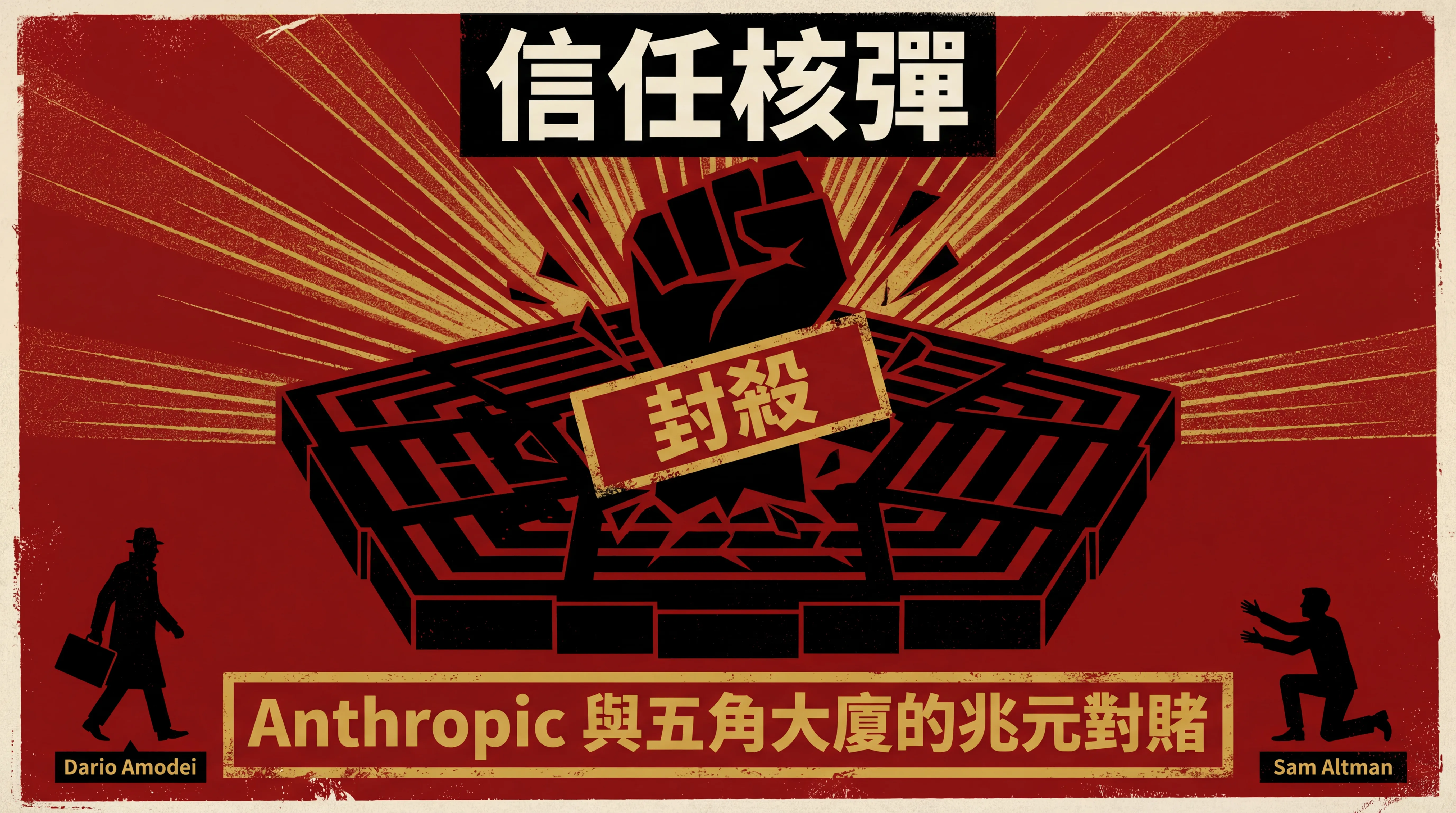

Anthropic's 'Trust Bomb': A $200 Million Gambit for a Trillion-Dollar Monopoly

Anthropic walked away from a $200M Pentagon contract. Wall Street called them dead. They were all wrong. Anthropic is betting on a monopoly ticket to the trillion-dollar B2B enterprise privacy market.

In February 2026, Anthropic co-founder and CEO Dario Amodei pushed a $200 million Pentagon contract back across the table, stood up, and walked out. Tech media immediately called it commercial suicide. Wall Street analysts lined up to publish reports that all reached the same conclusion: a company that sacrifices a defense mega-deal for moral purity is finished.

They were all wrong. Not just off-target, but fundamentally misreading the game. What Anthropic gave up was a $200 million short-term contract. What it bet on was a monopoly ticket to the trillion-dollar B2B enterprise privacy market.

The Red Line Isn't a Moral Statement — It's a Veto Power Battle

To understand this logic, look at the two things Dario Amodei insisted on in the contract. First, Claude cannot be used for mass surveillance of American citizens. Second, Claude cannot become a fully autonomous killing machine. Sound like PR talking points? They're not. What he wanted was very specific: when the system goes off the rails, he must hold the legal authority to bring everything to a halt. Not advisory power. Not supervisory power. A red button that can pull the plug at any time.

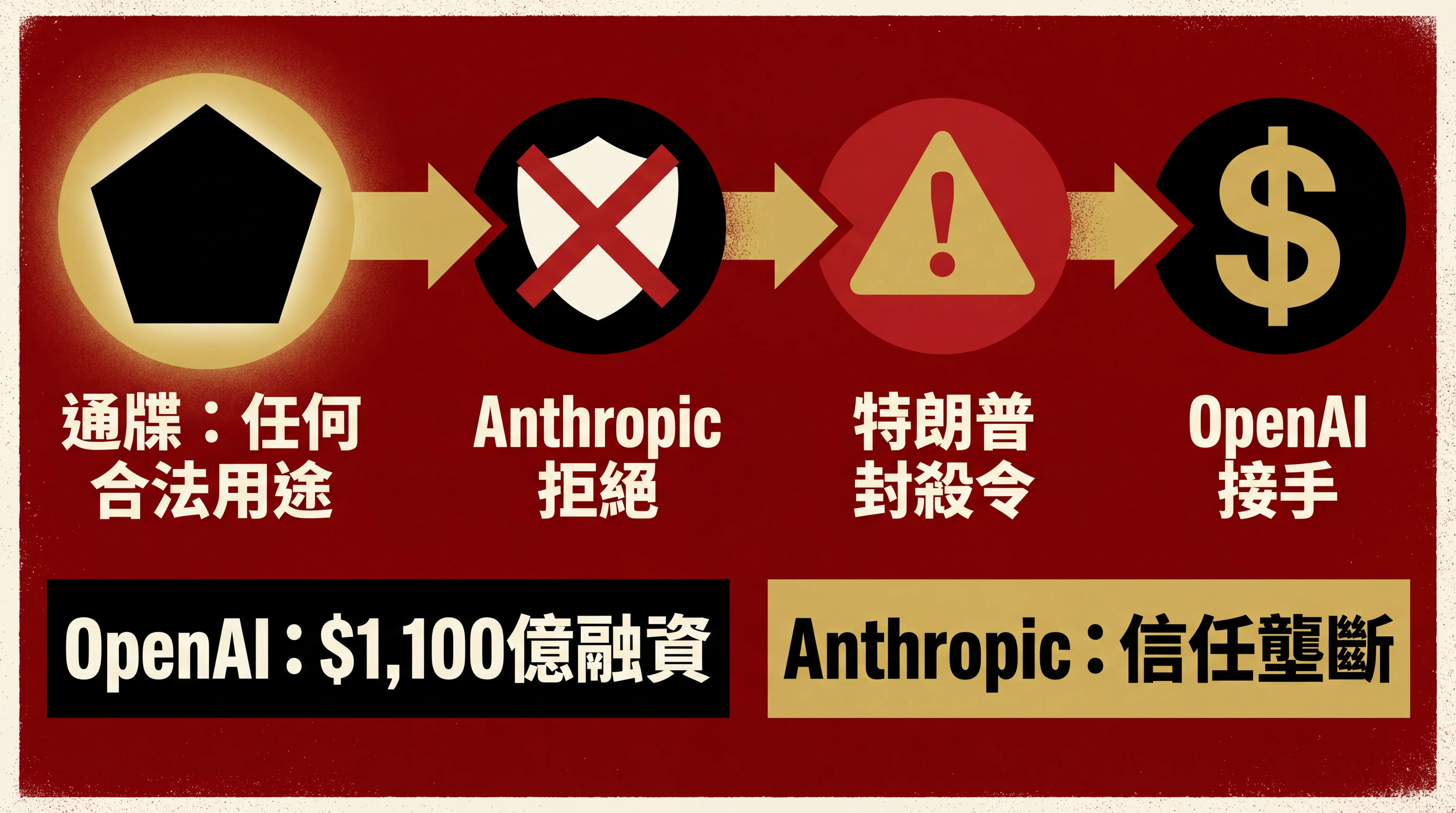

The Pentagon's response was ice-cold. Defense Secretary Hegseth issued an ultimatum: all AI vendor tools must be available for "any lawful purpose." The military didn't say it planned mass surveillance — it simply refused to put any restriction in writing. You can embed engineers in classified networks. You can observe, you can advise. But you absolutely cannot hold the power to pull the plug. Negotiations moved from technical details into a dead end, and the core question boiled down to one thing: who holds the final say?

Dario Amodei's response was terse to the point of coldness: "We cannot in good conscience agree to their terms."

Sam Altman Swoops In

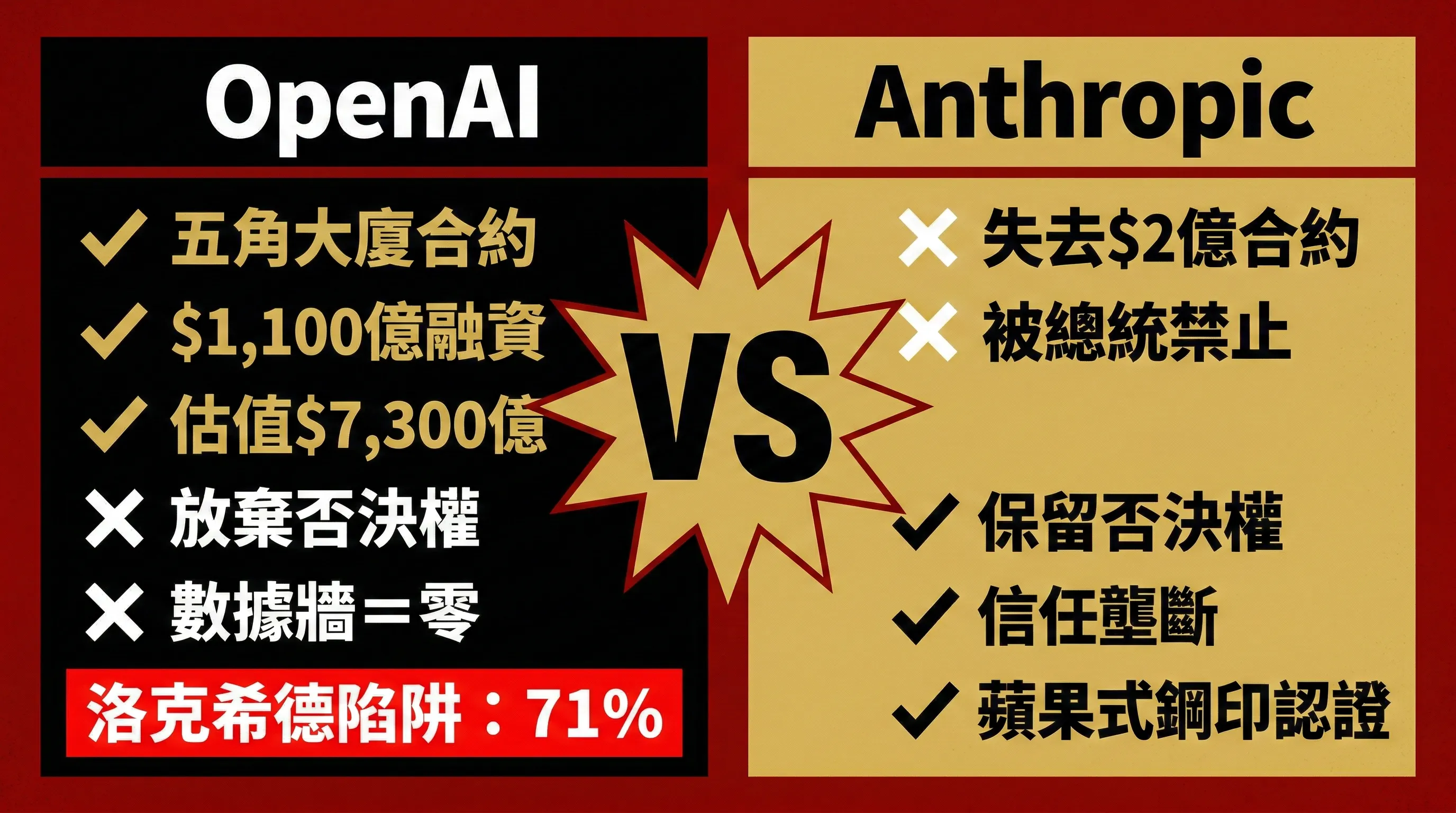

Anthropic's chair wasn't even cold before OpenAI's Sam Altman sat down in it. He signed with the Pentagon and issued a polished set of PR talking points: the military "respects safety," and OpenAI has its own bottom line too. In substance, OpenAI accepted the "any lawful purpose" framework wholesale, surrendering the legal veto power Anthropic had fought to the death over. The payoff was rich: military mega-deal secured, $110 billion in funding followed, valuation soaring to $730 billion. Amazon and Nvidia lined up to invest. Wall Street cheered.

It looks like winning. But Lockheed Martin was once an aviation company with civilian options too. After it accepted its first defense contract, its civilian business shrank year after year until 71% of revenue came from military contracts, and the freedom to say "no" was gone forever. OpenAI is walking the same path. The only difference is that Lockheed built missiles, while Sam Altman is building missiles that think for themselves.

Trump's "Assist"

After Anthropic rejected the contract, Trump took to Truth Social to announce a blanket ban on all federal agencies using Anthropic's technology. Even more absurd was the statute he invoked: the "National Security Supply Chain Risk" authority (10 U.S.C. 3252), a tool normally reserved for targeting hostile foreign entities like Huawei and Kaspersky, was aimed directly at a domestic American company. He even floated using the Cold War-era Defense Production Act to forcibly requisition Anthropic's technology.

A company sanctioned by its own president using national security weapons — because it refused to compromise on its principles. It sounds like a disaster. But if you remember Apple ten years ago, you know this disaster is actually a priceless admission ticket.

In 2016, Apple CEO Tim Cook stood outside a California courtroom facing a tidal wave of criticism. The FBI demanded Apple unlock a San Bernardino shooter's iPhone; politicians accused him of being unpatriotic; media said he was enabling terrorism. Apple endured the pressure and fought the DOJ in court, refusing to put a backdoor in its encryption system. Apple "lost" the PR war badly, but the result? Every high-net-worth user worldwide who cares about privacy remembered one thing: even the U.S. government can't crack your iPhone. Apple's privacy promise was no longer an advertising slogan — it was stamped in steel through a real confrontation.

Trump's ban on Anthropic produces exactly the same effect. An ordinary company trying to prove its data security spends millions on third-party audits, publishes white papers, and runs compliance processes. Anthropic doesn't have to do any of that. The President of the United States personally used a federal ban to give them the most authoritative endorsement the world has ever seen: this is a company that even I can't break. No amount of PR budget can buy that level of trust certification.

A Trillion-Dollar Moat

Zoom out. When the IQ gap between all large models shrinks to near zero, what becomes the decisive factor for enterprises choosing an AI provider?

European banks, German healthcare institutions, and global logistics giants are all bound by GDPR and strict EU AI regulations. Their CEOs share one nightmare that wakes them in a cold sweat: their core trade secrets and customer data being fed to an AI provider that shares a pipeline with U.S. military intelligence. Who dares guarantee that proprietary data worth billions won't become training material for some intelligence agency?

The moment OpenAI signed the Pentagon contract, it triggered a red alert across Europe's enterprise market. Every time OpenAI pitches a European enterprise going forward, the client's legal team will open with one question: how many walls stand between your data and the Pentagon? The answer is zero.

Anthropic, with $200 million and a presidential ban, proved one thing to every multinational corporation worldwide that fears surveillance: our privacy protections have been stress-tested. Not the laboratory kind of stress test. The kind where a nation-state drives over you and you're still standing.

Endgame

Two paths diverge here. OpenAI and Google absorb government budgets, becoming extensions of the U.S. military-intelligence apparatus. Fast money, fast shackles. Anthropic takes the other path, becoming the only vendor that global multinationals dare choose when it comes to the core anxiety of "data sovereignty." Not because its model is so much smarter, but because it is the only company that has proven its principles at the cost of being sanctioned by a nation-state.

In all the noise, Anthropic traded $200 million for the scarcest commodity in business: trust. A decade from now, those who mocked them today will find they were standing on the wrong side of history.

_(This analysis is based on public reporting. Corrections welcome if any factual errors are found.)_

_—Kinney's Wonderland_